Overshoot: Real-Time Vision AI for Developers

In an era where artificial intelligence is steadily moving beyond text and into the physical world, Overshoot has emerged as a startup determined to redefine how machines see, interpret, and respond to reality in real time. Founded in 2025 and part of the Winter 2026 batch, the San Francisco–based company is building an AI-native platform that enables developers to create and deploy real-time vision applications with unprecedented speed and simplicity. With a compact team of two founders and backing from primary partner Jon Xu, Overshoot represents a new wave of highly specialized infrastructure startups focused on solving the bottlenecks that arise when AI meets the physical environment.

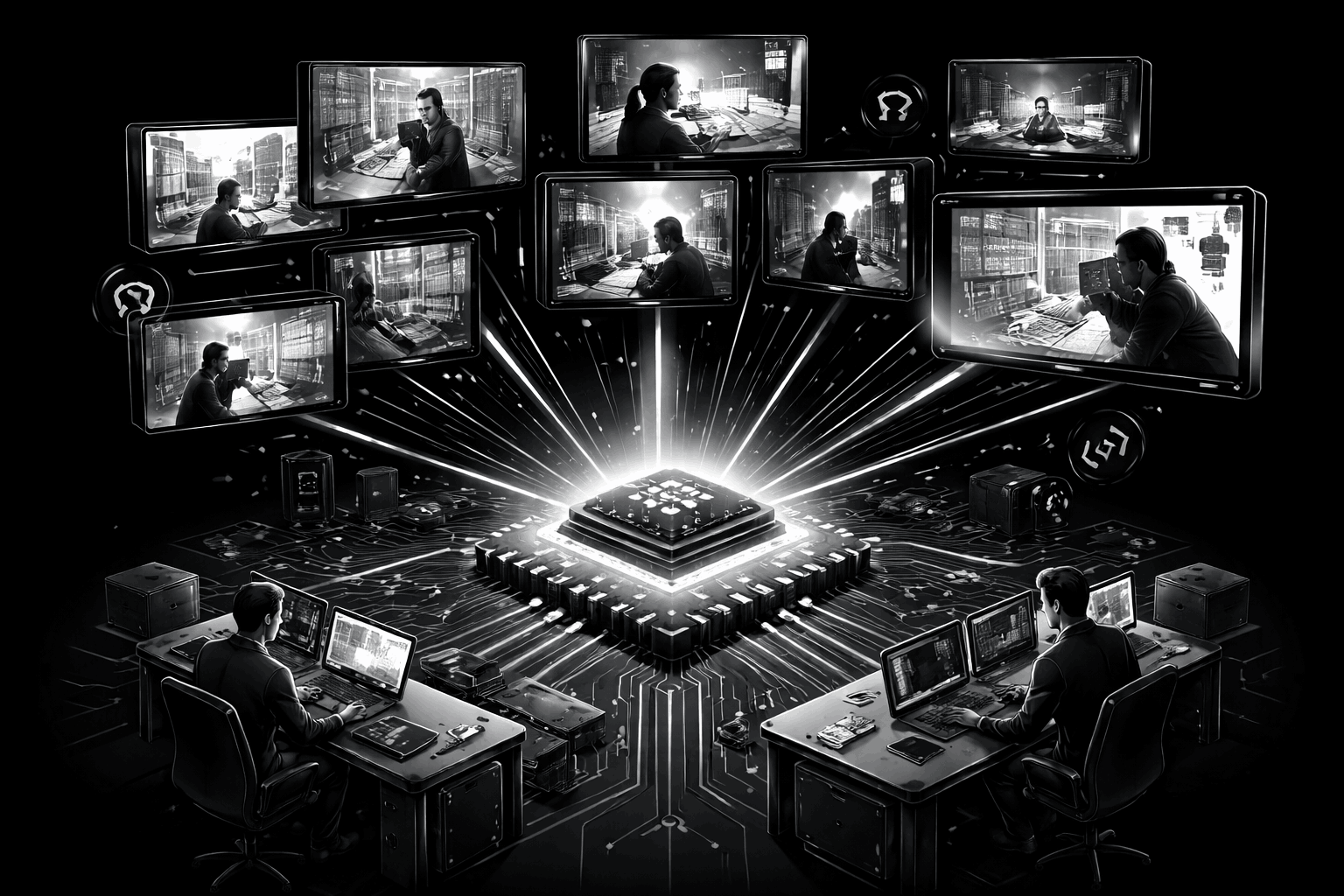

The significance of Overshoot’s mission lies in a fundamental shift in artificial intelligence itself. While earlier breakthroughs centered on language models that process text, the next frontier involves systems that can perceive the world visually — through images, video streams, and sensor data. This capability unlocks applications across physical security, safety monitoring, gaming, robotics, smart homes, and consumer products. Soon, intelligent video agents may watch over homes, monitor pets, assist elderly family members, or coordinate autonomous machines. Overshoot positions itself as the enabling layer that makes these possibilities practical for developers rather than theoretical.

Why Has Building Real-Time Vision Applications Been So Difficult?

Despite rapid progress in AI research, developers attempting to build real-time vision systems have faced a frustrating landscape. Existing platforms often struggle with slow inference speeds, limited model availability, and infrastructure that fails under scale. Processing video in real time requires enormous computational efficiency because delays of even a few hundred milliseconds can make an application unusable in safety-critical or interactive contexts.
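To make the latency constraint concrete, the short sketch below works out how many frames of a live stream go by while a single response is still pending. The frame rate and latency values are illustrative assumptions, not measurements from any particular platform.

```python
def frames_elapsed(latency_ms: float, fps: float) -> float:
    """Return how many frames a camera produces while one response is pending."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    # frames per millisecond (fps / 1000) times the waiting time in milliseconds
    return latency_ms * fps / 1000.0


if __name__ == "__main__":
    # Assumed example values: a 30 fps camera and three response latencies.
    for latency_ms in (200, 500, 2000):
        behind = frames_elapsed(latency_ms, fps=30)
        print(f"{latency_ms} ms at 30 fps: {behind:.0f} frames behind the live scene")
```

At 30 frames per second, a 200 ms response is already about six frames old when it arrives, and a two-second response is sixty frames old, which is why the delay matters so much in interactive settings.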

Traditional computer vision solutions also lacked the “general intelligence” now associated with large language models. Earlier systems were typically trained for narrow tasks — recognizing faces, detecting objects, or tracking movement — but could not interpret scenes holistically or respond dynamically to new situations. Developers frequently encountered fragmented toolchains, unreliable streaming pipelines, and hardware constraints that made deployment expensive and complex.

Overshoot identifies this gap as both technical and conceptual. The startup argues that image and video data are fundamentally different modalities from text and therefore require dedicated infrastructure rather than adaptations of language-focused systems. Without purpose-built pipelines — from codecs and streaming protocols to inference engines — real-time vision will remain constrained by bottlenecks.

How Does Overshoot Solve the Real-Time Vision Bottleneck?

Overshoot’s core value proposition is deceptively simple: make real-time vision as easy to build as modern web applications. According to the company, developers can connect live video feeds to a large collection of Vision Language Models using just three lines of code. Once connected, the system delivers responses in under 200 milliseconds, which the company says is ten times faster than existing inference platforms and quicker than typical human visual reaction time (roughly 250 milliseconds).

This performance is achieved through an end-to-end approach that optimizes every layer of the stack. Rather than relying on generic infrastructure, Overshoot focuses exclusively on image and video processing. The platform integrates streaming protocols, compression techniques, and specialized inference engines designed for continuous visual input. By controlling the pipeline from capture to interpretation, the startup reduces latency and eliminates many of the compatibility issues that plague multi-vendor solutions.
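One way to reason about an end-to-end pipeline of this kind is to time each stage separately and compare the total against a latency budget. The sketch below is a generic illustration, not Overshoot's implementation: the three stage functions are hypothetical stand-ins that do no real capture, compression, or inference, and the 200 ms budget simply mirrors the figure quoted above.

```python
import time
from typing import Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[bytes], bytes]]


def capture(_: bytes) -> bytes:
    return bytes(64)  # hypothetical stand-in for grabbing a raw frame


def compress(frame: bytes) -> bytes:
    return frame[: len(frame) // 2]  # hypothetical stand-in for a video codec


def infer(payload: bytes) -> bytes:
    return b"a person is standing at the door"  # stand-in for a model response


STAGES: List[Stage] = [("capture", capture), ("compress", compress), ("infer", infer)]


def run_pipeline(stages: List[Stage], budget_ms: float) -> Dict[str, object]:
    """Run the stages in order and record how long each one takes."""
    timings_ms: Dict[str, float] = {}
    data = b""
    for name, stage in stages:
        start = time.perf_counter()
        data = stage(data)
        timings_ms[name] = (time.perf_counter() - start) * 1000.0
    total_ms = sum(timings_ms.values())
    return {
        "output": data,
        "timings_ms": timings_ms,
        "total_ms": total_ms,
        "within_budget": total_ms <= budget_ms,
    }


if __name__ == "__main__":
    result = run_pipeline(STAGES, budget_ms=200.0)
    for name, elapsed in result["timings_ms"].items():
        print(f"{name}: {elapsed:.3f} ms")
    print("within budget:", result["within_budget"])
```

Per-stage timing like this shows where a budget is being spent, which is the kind of visibility that is harder to get when each stage comes from a different vendor.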

Equally important is the removal of infrastructure headaches. Developers using Overshoot do not need to manage complex GPU clusters, scaling strategies, or data pipelines. The platform abstracts these challenges into an API that allows teams to focus on building applications rather than maintaining systems. For startups and independent developers, this reduction in operational complexity could dramatically accelerate experimentation and product development.

What Makes Overshoot’s Technology Distinctive?

Overshoot’s technological differentiation stems from its singular focus on visual data. The company argues that most AI infrastructure has evolved around text processing, leaving vision applications underserved. By concentrating exclusively on images and video, Overshoot claims it can make technical leaps that general-purpose platforms cannot.

The startup’s innovations span multiple layers. At the data level, it addresses the challenges of video compression and streaming, so that high-quality feeds can be transmitted efficiently. At the processing level, it deploys optimized inference engines built to handle continuous visual input with minimal added delay. At the model level, it provides access to a large collection of Vision Language Models, allowing developers to choose the most appropriate model for their use case.
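The data-level problem is easy to see with some arithmetic. The sketch below estimates the bitrate of uncompressed video and the compression ratio needed to fit it through a given network link. The resolution, frame rate, and 5 Mbit/s uplink are assumed example values and do not come from the company.

```python
def raw_bitrate_mbps(width: int, height: int, fps: float, bytes_per_pixel: int = 3) -> float:
    """Bitrate of uncompressed video in megabits per second (1 Mbit = 1,000,000 bits)."""
    bits_per_frame = width * height * bytes_per_pixel * 8
    return bits_per_frame * fps / 1_000_000


def required_compression_ratio(raw_mbps: float, link_mbps: float) -> float:
    """How many times smaller the stream must become to fit the link."""
    if link_mbps <= 0:
        raise ValueError("link_mbps must be positive")
    return raw_mbps / link_mbps


if __name__ == "__main__":
    raw = raw_bitrate_mbps(1920, 1080, fps=30)  # assumed 1080p feed, 24-bit color
    ratio = required_compression_ratio(raw, link_mbps=5)  # assumed 5 Mbit/s uplink
    print(f"raw 1080p30: {raw:.0f} Mbit/s")
    print(f"compression needed for a 5 Mbit/s link: about {ratio:.0f}x")
```

Under these assumptions a raw 1080p feed runs at roughly 1.5 Gbit/s, so it has to shrink by a factor of about 300 before it fits a modest uplink. That is why codec and streaming choices sit on the critical path for latency.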

This specialization creates what the company describes as its “moat.” By mastering the nuances of visual data — including bandwidth constraints, frame synchronization, and temporal context — Overshoot can deliver performance improvements that are difficult for competitors to replicate quickly. In a field where milliseconds matter, such optimization could become a decisive advantage.
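Temporal context, one of the nuances mentioned above, is often handled by keeping a short rolling window of recent frames so that a model sees what just happened and not only the latest image. The following is a minimal sketch of that idea. The class name `FrameWindow` and its parameters are hypothetical and are not part of any Overshoot API.

```python
from collections import deque
from typing import Any, Deque, List, Tuple


class FrameWindow:
    """Keep the most recent frames, bounded by both count and age."""

    def __init__(self, max_frames: int, max_age_s: float) -> None:
        self._frames: Deque[Tuple[float, Any]] = deque(maxlen=max_frames)
        self._max_age_s = max_age_s

    def add(self, timestamp_s: float, frame: Any) -> None:
        # The deque's maxlen silently drops the oldest frame when full.
        self._frames.append((timestamp_s, frame))
        # Also drop frames that are too old relative to the newest one.
        while timestamp_s - self._frames[0][0] > self._max_age_s:
            self._frames.popleft()

    def context(self) -> List[Any]:
        """Frames in chronological order, oldest first."""
        return [frame for _, frame in self._frames]


if __name__ == "__main__":
    window = FrameWindow(max_frames=3, max_age_s=1.0)
    for ts, frame in [(0.0, "a"), (0.4, "b"), (0.8, "c"), (1.2, "d")]:
        window.add(ts, frame)
    print(window.context())
```

Bounding the window by age as well as by count keeps stale frames out of the context after a stream stalls and then resumes.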

Who Are the Founders Behind Overshoot?

Overshoot’s story is also a story of family collaboration and complementary expertise. The startup was founded by cousins Younes El Hjouji and Zakaria El Hjouji, both of whom bring deep experience in large-scale systems and artificial intelligence infrastructure.

Zakaria El Hjouji, the CEO, graduated at the top of his class at both the London School of Economics and MIT. His professional background includes building low-latency, high-throughput pricing systems at Uber — the technology behind surge pricing — and developing inference engines at Meta AI. He has also written GPU kernels, won several prominent AI hackathons, and built and sold a software product during graduate school. His interests center on inference optimization and video understanding, making him particularly suited to tackling the challenges of real-time AI.

Younes El Hjouji, the CTO, previously served as a founding engineer at Cosmonio, a company later acquired by Intel. At Intel, he worked on computer vision AI frameworks and built a training and serving platform for vision models from the ground up. Through this work, he observed customers abandoning traditional computer vision tools because they lacked the general intelligence emerging from language models. This firsthand insight shaped Overshoot’s direction toward Vision Language Models that combine perception with reasoning.

Together, the founders have shipped large-scale systems and witnessed where they fail under real-world conditions. Their combined experience at Uber, Meta, and Intel provides credibility in an industry where performance claims must be backed by engineering rigor.

How Are Developers Using Overshoot Today?

Company-reported adoption figures suggest that Overshoot is gaining traction among developers eager to experiment with real-time vision. More than 300 developers initially connected live video feeds to the platform, and the company reports that over 1,000 developers are now using it to ship video agents across industries such as gaming, robotics, and security.

In gaming, real-time vision can enable AI characters that perceive and react to player behavior dynamically. In robotics, it allows machines to interpret complex environments without preprogrammed scripts. In security, video agents can monitor spaces continuously, detect anomalies, and respond instantly to threats. Consumer applications may include smart home systems that recognize situations rather than merely detecting motion.

These use cases illustrate a broader shift from passive monitoring to active understanding. Instead of simply recording video, systems built on Overshoot can interpret events, generate alerts, and even take automated actions. Such capabilities could redefine how humans interact with technology in physical spaces.
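Moving from interpretation to alerts usually needs some guard against one-frame misreadings. A common pattern, sketched below, is to raise an alert only after the same event has been observed on several consecutive frames. The labels and threshold are assumed for illustration, and the class is hypothetical, not drawn from Overshoot's platform.

```python
from typing import List


class AlertDebouncer:
    """Fire once when a label has been seen on N consecutive frames."""

    def __init__(self, label: str, min_consecutive: int) -> None:
        if min_consecutive < 1:
            raise ValueError("min_consecutive must be at least 1")
        self.label = label
        self.min_consecutive = min_consecutive
        self._streak = 0

    def update(self, observed_label: str) -> bool:
        """Feed one per-frame label; return True on the frame the alert fires."""
        if observed_label == self.label:
            self._streak += 1
        else:
            self._streak = 0
        # Equality (not >=) means the alert fires once per uninterrupted streak.
        return self._streak == self.min_consecutive


if __name__ == "__main__":
    debouncer = AlertDebouncer("person_at_door", min_consecutive=3)
    labels: List[str] = ["none", "person_at_door", "person_at_door",
                         "person_at_door", "person_at_door", "none"]
    for index, label in enumerate(labels):
        if debouncer.update(label):
            print(f"alert raised at frame {index}")
```

The threshold trades responsiveness for reliability: a higher value suppresses more spurious alerts but delays the real ones by that many frames.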

What Could Real-Time Vision Mean for the Future of AI?

Overshoot’s ambitions extend beyond developer convenience. The company envisions a future in which AI systems seamlessly observe and interpret the physical world, enabling safer cities, smarter infrastructure, and more responsive products. Real-time vision could transform industries ranging from healthcare — where AI monitors patients — to transportation, manufacturing, and entertainment.

However, this future also raises questions about privacy, ethics, and governance. Systems capable of constant visual monitoring must be designed responsibly to prevent misuse. Overshoot’s role as infrastructure provider places it at the center of these discussions, as the capabilities it enables will shape how society adopts visual AI.

From a technological perspective, real-time vision represents the convergence of multiple AI disciplines: perception, reasoning, and action. Overshoot’s platform aims to provide the connective tissue that allows these components to operate together efficiently. If successful, it could accelerate the transition from experimental prototypes to production-ready applications.

Why Is Overshoot Positioned to Succeed?

Several factors contribute to Overshoot’s potential for success. First is timing: the surge in Vision Language Models has created demand for infrastructure that can support them. Second is specialization: by focusing exclusively on image and video modalities, the company avoids dilution of effort. Third is the founders’ experience in building systems that operate at scale and low latency.

Additionally, Overshoot’s developer-centric approach aligns with how successful infrastructure companies grow. By making integration simple and eliminating operational barriers, the platform encourages experimentation. Developers who build prototypes today may become enterprise customers tomorrow.

The startup’s small team size also reflects a modern trend in AI entrepreneurship, where highly skilled founders leverage advanced tools to achieve outsized impact. Rather than scaling headcount immediately, Overshoot appears focused on refining its core technology and building a loyal developer community.

What Lies Ahead for Overshoot?

As Overshoot continues to evolve, its trajectory will depend on several factors: adoption by major enterprises, expansion of supported models, and the ability to maintain performance as usage scales. Partnerships with hardware providers, robotics companies, or security firms could accelerate growth and validate the platform’s capabilities in real-world deployments.

The broader AI landscape is becoming increasingly competitive, with major technology companies investing heavily in multimodal systems. Overshoot’s challenge will be to maintain its speed advantage and developer friendliness while expanding features. If it can do so, the startup may become a foundational layer for applications that require machines to see and understand the world instantly.

Ultimately, Overshoot reflects a broader shift in artificial intelligence from language-centric systems to perception-driven intelligence. By enabling developers to build real-time vision applications without prohibitive complexity, the company is not merely improving existing workflows; it is opening the door to entirely new categories of products and services. Whether monitoring homes, guiding robots, or enhancing entertainment experiences, the applications built on Overshoot’s platform may soon become an integral part of everyday life.