Stack AI: No-Code Platform for Building AI Applications

Artificial intelligence (AI) has become an important tool for businesses across many industries. Building AI applications, however, can be complex and time-consuming, and it often requires specialized expertise. Stack AI is a startup that aims to make AI application development accessible to more people. Its visual platform, the company says, lets teams build and deploy applications powered by Large Language Models (LLMs) in minutes. This article covers Stack AI's key features, its founders, and the kinds of applications the platform supports.

Founders Driving Innovation

Stack AI was co-founded by Bernardo Aceituno and Toni Rosinol, both of whom earned PhDs at MIT. Aceituno, whose background is in AI and optimization algorithms, drives much of the platform's technical development. Rosinol also brings AI expertise and contributes to the company's strategic direction.

Unleashing the Power of Stack AI

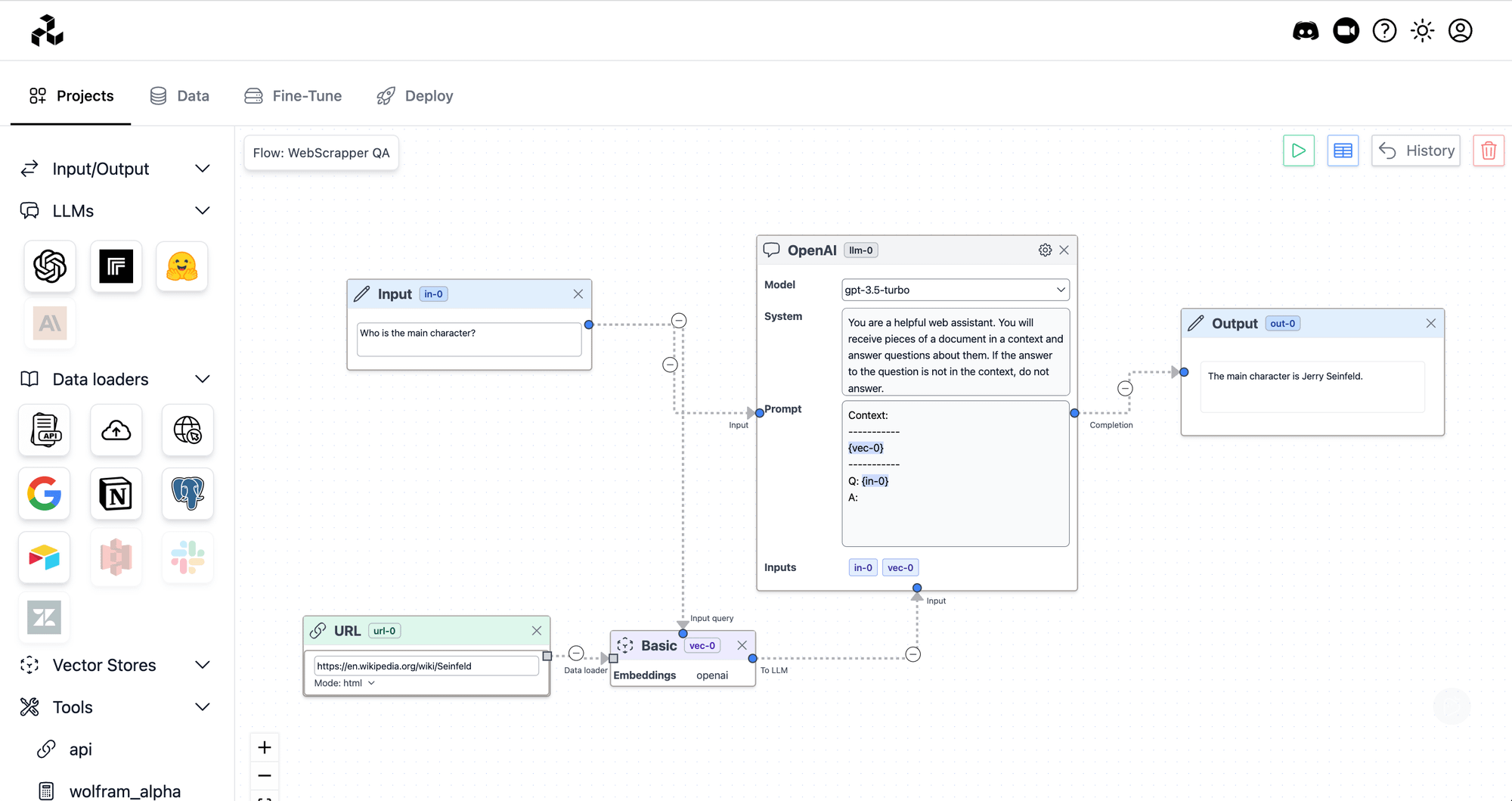

Stack AI's platform lets teams design, test, and deploy AI workflows using state-of-the-art models such as OpenAI's GPT models, which power ChatGPT. Its graphical interface is designed so that non-technical users can also build applications, including chatbots and assistants, document-processing tools, question-answering systems, and content-generation tools.

No-Code Tools for Seamless Development

Stack AI emphasizes no-code tools, so users can build AI applications without extensive programming knowledge. Workflows are assembled by dragging components such as LLMs, vector databases, tools, and data loaders onto a canvas and connecting them, so that each component's output feeds the next. Because the workflow is visual, developers and non-developers can collaborate on the same application.
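
Stack AI's internal workflow format is not described in this article, so the following is only a conceptual sketch of what "connecting components" means: each component is a function, each connection routes one component's output into another's input, and running the workflow evaluates components in dependency order. The `Workflow` class and the component names (`loader`, `retriever`, `llm`) are hypothetical, and the "LLM" is a stub that formats a string instead of calling a real model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Sequence

# A component receives the merged outputs of its inputs and returns new outputs.
Component = Callable[[dict], dict]


@dataclass
class Workflow:
    """Hypothetical model of a visual workflow: named components plus connections."""
    nodes: Dict[str, Component] = field(default_factory=dict)
    inputs: Dict[str, List[str]] = field(default_factory=dict)

    def add(self, name: str, component: Component, inputs: Sequence[str] = ()) -> None:
        self.nodes[name] = component
        self.inputs[name] = list(inputs)

    def run(self, initial: dict) -> Dict[str, dict]:
        results: Dict[str, dict] = {}

        def evaluate(name: str) -> dict:
            # Run upstream components first, caching each result.
            if name not in results:
                state = dict(initial)
                for upstream in self.inputs[name]:
                    state.update(evaluate(upstream))
                results[name] = self.nodes[name](state)
            return results[name]

        for name in self.nodes:
            evaluate(name)
        return results


# Stub components: a loader, a keyword "retriever", and a fake LLM.
wf = Workflow()
wf.add("loader", lambda s: {"docs": ["Refunds take 5 days.", "Shipping is free."]})
wf.add("retriever",
       lambda s: {"context": [d for d in s["docs"] if s["keyword"] in d.lower()]},
       inputs=["loader"])
wf.add("llm", lambda s: {"answer": "Based on: " + " ".join(s["context"])},
       inputs=["retriever"])

print(wf.run({"keyword": "refund"})["llm"]["answer"])  # Based on: Refunds take 5 days.
```

The recursive `evaluate` helper caches each result, so a component shared by several downstream components runs only once. It assumes the graph has no cycles, which a real visual editor would need to check.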

Connect Data from Any Source

Stack AI can load data from many sources, including files, websites, and services and databases such as Notion, Airtable, and Postgres. This lets users connect their LLM-powered applications to the data they already have and keep that data flowing into their workflows.
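
Before loaded documents are stored in a vector database, they are usually split into smaller, overlapping chunks so that each piece fits in a model's context window and can be retrieved on its own. The sketch below shows this common chunking step. The function name, the source names, and the character-based sizes are illustrative assumptions, not Stack AI's actual loader settings.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character chunks that overlap by `overlap` chars."""
    if chunk_size <= 0 or not (0 <= overlap < chunk_size):
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this chunk already reaches the end of the text
    return chunks


# Hypothetical documents pulled from different sources, tagged by origin.
sources = {"faq.txt": "abcdefghij", "notion:onboarding": "klmnop"}
records = [(name, chunk) for name, text in sources.items()
           for chunk in chunk_text(text, chunk_size=4, overlap=2)]
print(records)
```

Overlap keeps text that straddles a chunk boundary visible in both neighboring chunks. Production loaders often split on tokens or sentences rather than raw characters.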

Empowering LLMs with External APIs

Integration goes beyond data sources. Users can also connect their LLM applications to external APIs, including services such as Google Search and WolframAlpha. This lets a model retrieve current information or perform computations it cannot do reliably on its own, instead of relying only on what it learned during training.
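
A common way to let an LLM use external APIs is tool calling: the model emits a structured request naming a tool and its arguments, and the application runs the matching function and hands the result back to the model. The sketch below shows only the dispatch step. The tool names, the JSON shape, and both tools are hypothetical stand-ins; they do not actually call Google Search or WolframAlpha.

```python
import json
from typing import Callable, Dict

# Registry mapping tool names (as the model refers to them) to functions.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Decorator that registers a function under a tool name."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("search")
def search(query: str) -> str:
    # Stub: a real tool would call a web search API here.
    return f"[stub search results for {query!r}]"


@tool("calculator")
def calculator(expression: str) -> str:
    # Stub: only adds numbers such as "2 + 3.5"; a real tool might call WolframAlpha.
    return str(sum(float(term) for term in expression.split("+")))


def dispatch(model_output: str) -> str:
    """Parse a tool call emitted by the model and run the matching tool."""
    call = json.loads(model_output)
    fn = TOOLS.get(call["tool"])
    if fn is None:
        raise KeyError(f"unknown tool: {call['tool']}")
    return fn(**call["arguments"])


print(dispatch('{"tool": "calculator", "arguments": {"expression": "2 + 3.5"}}'))  # 5.5
```

In a full loop, the string returned by `dispatch` would be appended to the conversation so the model can use it in its final answer.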

Jumpstart Projects with Templates

To speed up development, Stack AI provides a library of templates for common workflows, such as a chatbot or a document question-answering system. Teams can start from a template and customize it instead of building each workflow from scratch, which makes rapid experimentation easier.

Experimentation and Fine-Tuning Made Easy

Experimentation is central to building LLM applications. Stack AI's interface lets users iterate quickly on different prompts and LLM architectures to prototype and refine a workflow. The platform also provides data-collection and analytics tools that help users fine-tune their LLMs to perform better on their specific product needs.
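
Comparing prompt variants usually means running each one over the same small set of labeled examples and measuring how often the output matches the expected answer. The sketch below does this with exact-match accuracy. `fake_model` is a placeholder for a real LLM call, written so that the two hypothetical prompts score differently.

```python
from typing import Callable, Dict, List, Tuple


def score_prompts(prompts: Dict[str, str],
                  examples: List[Tuple[str, str]],
                  model: Callable[[str], str]) -> Dict[str, float]:
    """Return the exact-match accuracy of each prompt template over the examples."""
    scores = {}
    for name, template in prompts.items():
        correct = sum(
            model(template.format(input=text)).strip().lower() == expected.lower()
            for text, expected in examples
        )
        scores[name] = correct / len(examples)
    return scores


def fake_model(prompt: str) -> str:
    # Placeholder for a real LLM call: labels anything mentioning "love" as
    # positive, and only answers tersely when the prompt asks for one word.
    label = "positive" if "love" in prompt.lower() else "negative"
    return label if "one word" in prompt.lower() else f"I think it is {label}."


prompts = {
    "plain": "What is the sentiment of this review? {input}",
    "constrained": "Answer in one word, positive or negative: {input}",
}
examples = [("I love this product", "positive"), ("Terrible support", "negative")]
print(score_prompts(prompts, examples, fake_model))  # {'plain': 0.0, 'constrained': 1.0}
```

Exact match is a strict metric; for free-form outputs, teams typically switch to fuzzier checks or human review.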

Instant Deployment for Seamless User Experience

Stack AI hosts every workflow as an API, so an application built on the platform can be called by end users and other software as soon as it is deployed. The company describes these hosted APIs as low-latency. Because Stack AI manages the hosting, teams do not need to set up and maintain their own serving infrastructure and can focus on the user experience.
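
Because each workflow is exposed as an API, any program that can send an HTTP request can use it. The sketch below builds and sends such a request with Python's standard library. The endpoint URL, the bearer-token header, and the `{"input": ...}` payload shape are all assumptions for illustration; Stack AI's own documentation defines the real request format.

```python
import json
import urllib.request


def build_workflow_request(endpoint_url: str, api_key: str,
                           user_input: str) -> urllib.request.Request:
    """Build a POST request for a hosted workflow (payload shape is assumed)."""
    body = json.dumps({"input": user_input}).encode("utf-8")
    return urllib.request.Request(
        endpoint_url,
        data=body,
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def call_workflow(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Hypothetical endpoint and key; replace with real values before sending.
req = build_workflow_request("https://example.com/workflows/demo",
                             "YOUR_API_KEY", "Summarize this contract.")
# result = call_workflow(req)  # needs a real endpoint and key
```

Separating request construction from sending makes the payload easy to inspect and test without network access.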

Production-Ready AI Applications

The same components used for prototyping carry through to production. Applications are composed by connecting LLMs, vector databases, tools, and data sources in the visual editor, and the resulting workflow is what gets deployed. Keeping the whole application in one visual representation makes it easier for teams to collaborate and to move from a prototype to a scalable production service.

Fine-Tuning for Optimal Performance

To better match specific product needs, Stack AI supports fine-tuning LLMs. Users collect relevant examples and run fine-tuning jobs that adapt a base model to their task. A fine-tuned model can give more accurate and more consistent results on that task than a general-purpose model, which lets businesses tailor their AI applications to their own requirements.

Label Data for Enhanced Training

High-quality labeled data is essential for fine-tuning LLMs. Stack AI includes built-in labeling tools so that users can annotate examples efficiently and assemble the labeled datasets that training requires.
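
Labeled examples typically end up in a file that a fine-tuning job can read. As one concrete example, OpenAI's chat fine-tuning format is JSON Lines in which each line holds a `messages` list of system, user, and assistant turns. The sketch below converts labeled pairs into that format and skips items that have not been labeled yet. Whether Stack AI uses this format internally is an assumption, and `to_chat_jsonl` is a hypothetical helper.

```python
import json
from typing import Iterable, Optional, Tuple


def to_chat_jsonl(examples: Iterable[Tuple[str, Optional[str]]],
                  system_prompt: str) -> str:
    """Convert (input, label) pairs to chat-format JSON Lines, skipping unlabeled items."""
    lines = []
    for user_text, label in examples:
        if not label:
            continue  # not annotated yet; leave it out of the training set
        record = {"messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
            {"role": "assistant", "content": label},
        ]}
        lines.append(json.dumps(record))
    return "\n".join(lines)


labeled = [("Where is my order?", "shipping"),
           ("Cancel my plan", "billing"),
           ("Hello?", None)]  # unlabeled example
print(to_chat_jsonl(labeled, "Classify the support ticket."))
```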

Continuous Model Refinement with Prompt Engineering

Prompt engineering strongly affects how well an LLM performs. Stack AI offers tools for writing, testing, and maintaining prompts, so users can compare prompt variants and refine them iteratively. Unlike fine-tuning, prompt engineering changes the instructions given to the model rather than the model itself, which makes it a fast way to keep improving an application over time.

Robust Analytics and Monitoring

Teams need visibility into how their AI applications behave after deployment. Stack AI provides analytics and monitoring dashboards that show how applications are used and how they perform. By tracking metrics, monitoring trends, and analyzing user interactions, teams can find areas to improve, optimize their workflows, and improve the overall user experience.
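
The kind of summary a monitoring dashboard displays can be computed from per-request logs. The sketch below reports request count, error rate, and a latency percentile using the nearest-rank method. The log fields `latency_ms` and `status` are hypothetical; real dashboards define their own schema and metrics.

```python
from typing import Dict, List


def summarize(logs: List[dict], percentile: int = 95) -> Dict[str, float]:
    """Summarize request logs: volume, error rate, and a latency percentile."""
    key = f"p{percentile}_latency_ms"
    if not logs:
        return {"requests": 0, "error_rate": 0.0, key: 0.0}
    latencies = sorted(entry["latency_ms"] for entry in logs)
    # Nearest-rank percentile: the ceil(p/100 * n)-th smallest value, computed
    # with integer arithmetic to avoid floating-point rounding.
    rank = max(1, (percentile * len(latencies) + 99) // 100)
    errors = sum(1 for entry in logs if entry["status"] >= 400)
    return {
        "requests": len(logs),
        "error_rate": errors / len(logs),
        key: latencies[rank - 1],
    }


# Hypothetical logs: 20 requests with latencies 10..200 ms, two server errors.
logs = [{"latency_ms": 10 * i, "status": 500 if i in (7, 13) else 200}
        for i in range(1, 21)]
print(summarize(logs))  # {'requests': 20, 'error_rate': 0.1, 'p95_latency_ms': 190}
```

Percentiles are usually more informative than averages for latency, because a few slow requests can hurt users while barely moving the mean.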

Unleashing the Potential of Large Language Models

LLMs, such as the GPT models behind ChatGPT, have significantly advanced natural language processing and the applications built on it. Stack AI aims to make these models easier to integrate into products. With LLMs, businesses can build applications that understand and generate human-like text, supporting communication, information retrieval, and automation across a wide range of use cases.

Conclusion

Stack AI is a startup that aims to change how teams build and deploy applications with Large Language Models. With its no-code tools, data integrations, experimentation features, and fine-tuning support, it lets businesses incorporate AI into their products and services without building every piece from scratch. By making AI application development accessible to more people, Stack AI could help organizations across industries deliver AI solutions that improve user experiences, increase efficiency, and support growth.