Inside Chronicle Labs’ AI Safety Platform

The rapid rise of autonomous AI agents is changing how companies operate. Businesses are beginning to trust AI systems with customer interactions, operational workflows, internal decision-making, and even mission-critical processes. Yet as companies move faster toward AI adoption, one major problem continues to grow beneath the surface: reliability.

While modern AI agents can automate impressive tasks, most enterprises still hesitate to deploy them at scale because the risks are substantial. A single unexpected action, hallucinated response, or workflow failure can disrupt operations, damage customer trust, or cause financial losses. For large organizations, experimentation in production environments is rarely acceptable.

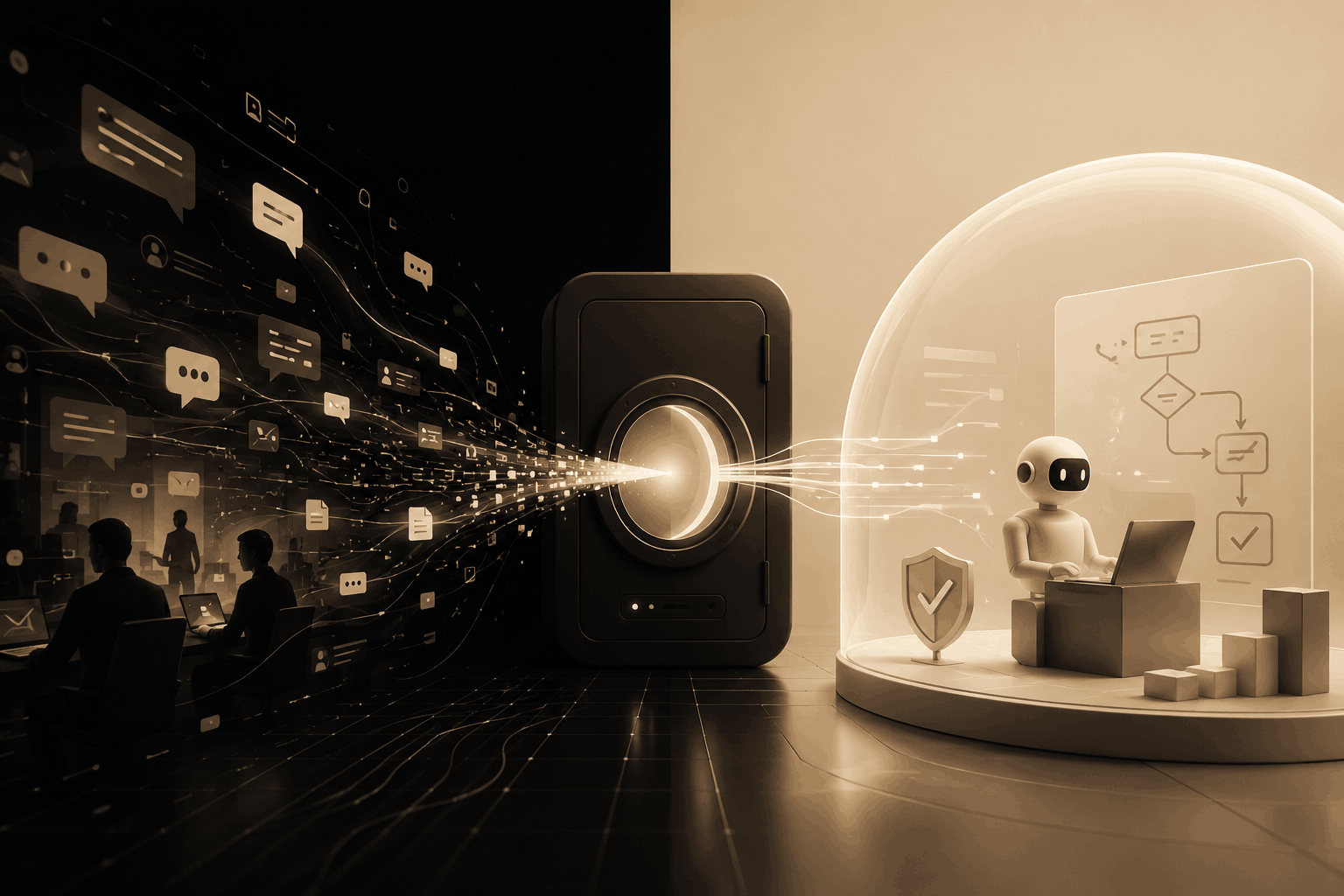

This is the challenge that Chronicle Labs aims to solve.

Founded in 2026 as part of the Spring 2026 batch, Chronicle Labs is building what it describes as a staging environment for enterprise AI agents. The company allows organizations to capture production events, replay them in controlled environments, and safely test new AI behaviors before deployment.

Instead of forcing companies to guess whether their AI systems will work correctly, Chronicle Labs provides a way to simulate operational reality.

The startup’s vision comes from a deep understanding of autonomous systems, aerospace engineering, and the importance of replayable data. Led by founders Ayman Saleh and Rowan Zyadeh, Chronicle Labs is positioning itself at the intersection of enterprise infrastructure, AI safety, and operational testing.

Why Are AI Agents Becoming a Massive Enterprise Challenge?

AI agents are rapidly evolving from simple assistants into autonomous systems capable of making decisions and executing workflows independently. Companies increasingly want AI agents to handle customer support, operations, scheduling, data analysis, document processing, and internal coordination.

However, deploying AI agents inside enterprise environments introduces a difficult problem: unpredictability.

Traditional software behaves according to predefined rules. AI agents, on the other hand, operate probabilistically. Even small changes in prompts, workflows, APIs, or company processes can produce unexpected outcomes.

For enterprises, this creates several concerns:

- AI agents may misunderstand context

- Workflows can drift over time

- Edge cases become difficult to predict

- Production testing can impact real users

- Static evaluation systems quickly become outdated

Many teams attempt to address these risks by creating internal evaluation frameworks. These “evals” typically test AI systems against fixed datasets or predefined scenarios. While useful initially, they often fail to represent the complexity of real business operations.

As businesses grow, workflows evolve constantly. Operational nuances, exceptions, and user behaviors change over time. Static testing environments struggle to keep pace with this reality.

Chronicle Labs believes the future of enterprise AI depends on solving this gap between laboratory testing and real-world operational behavior.

What Inspired Chronicle Labs to Build Replayable AI Infrastructure?

Chronicle Labs was founded by engineers who spent years working on highly sophisticated autonomous systems.

CEO Ayman Saleh previously worked at NASA’s Jet Propulsion Laboratory on programs that included the James Webb Space Telescope and the Mars 2020 Perseverance rover. His background exposed him to environments where reliability is not optional.

Space exploration systems cannot simply “fail and retry” in production. Engineers need tools that allow them to replay system states, analyze decisions, and understand behavior with precision.

According to Saleh, one of the most powerful concepts in robotics and aerospace engineering is the use of replayable logs. Tools that capture a full system state at a specific point in time let engineers investigate behavior almost as if they were traveling back in time.

In robotics ecosystems, technologies such as ROS bags and replay systems make it possible to reconstruct what happened during autonomous operations down to extremely fine detail.

This philosophy became foundational to Chronicle Labs.

The company sees enterprise AI agents as a new generation of autonomous systems. Like robots navigating Mars or spacecraft in deep space, AI agents inside businesses require environments where their behavior can be tested safely before deployment.

Chronicle Labs applies lessons from aerospace-grade reliability engineering to enterprise AI infrastructure.

How Does Chronicle Labs Actually Work?

At its core, Chronicle Labs captures operational events that AI agents experience in production and transforms them into replayable staging environments.

Instead of relying on synthetic tests or manually constructed evaluation datasets, Chronicle Labs uses a company’s real operational history.

The process works in several stages:

Capturing Operational Events

Chronicle Labs records the events, inputs, workflows, and contextual information that enterprise AI agents encounter during production usage.

This creates a detailed historical representation of how the business actually operates.
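To make the capture stage concrete, here is a minimal Python sketch of an append-only event log. The `AgentEvent` fields and the `EventRecorder` class are hypothetical illustrations, not Chronicle Labs' actual schema or API; the article does not describe its data format. The sketch simply assumes each event stores its timestamp, source, raw input, surrounding context, and what the production agent did.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class AgentEvent:
    """One production event seen by an agent (hypothetical schema)."""
    timestamp: float                 # seconds since epoch when the event occurred
    source: str                      # e.g. "support_ticket", "crm_update"
    payload: dict[str, Any]          # raw input the agent received
    context: dict[str, Any] = field(default_factory=dict)  # surrounding state
    outcome: str | None = None       # what the production agent actually did


class EventRecorder:
    """Append-only JSON Lines log of agent events (hypothetical helper)."""

    def __init__(self, path: str) -> None:
        self.path = path

    def record(self, event: AgentEvent) -> None:
        # Append one JSON object per line; earlier lines are never rewritten.
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps(asdict(event)) + "\n")

    def load(self) -> list[AgentEvent]:
        # Read events back in the order they were recorded.
        with open(self.path, encoding="utf-8") as f:
            return [AgentEvent(**json.loads(line)) for line in f if line.strip()]
```

An append-only log is a natural fit for replay because history is never edited after the fact, so any later test run sees exactly what production saw.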

Replaying Real Scenarios

The captured events can then be replayed inside controlled sandbox environments. Teams can test updated prompts, new workflows, model changes, or entirely different agent behaviors against real historical situations.

This creates far more realistic testing conditions than static evaluations.
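The replay idea can be sketched as feeding recorded events to a candidate agent while its side effects are captured instead of executed. In this minimal Python sketch, the agent is modeled as a plain function, and `SandboxTools`, `send_email`, and `issue_refund` are hypothetical stand-ins for production integrations; they are not part of any real Chronicle Labs interface.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class SandboxTools:
    """Stand-in for production side effects: calls are recorded, never executed."""
    calls: list[tuple[str, dict[str, Any]]] = field(default_factory=list)

    def send_email(self, to: str, body: str) -> None:
        self.calls.append(("send_email", {"to": to, "body": body}))

    def issue_refund(self, order_id: str, amount: float) -> None:
        self.calls.append(("issue_refund", {"order_id": order_id, "amount": amount}))


# An agent is modeled here as a function of (event payload, tools) -> decision.
Agent = Callable[[dict[str, Any], SandboxTools], str]


def replay(events: list[dict[str, Any]], agent: Agent) -> list[dict[str, Any]]:
    """Feed recorded events to a candidate agent inside a sandbox."""
    results = []
    for event in events:
        tools = SandboxTools()  # fresh sandbox per event so runs stay independent
        decision = agent(event["payload"], tools)
        results.append(
            {"event": event, "decision": decision, "side_effects": tools.calls}
        )
    return results
```

Because every tool call lands in `tools.calls` rather than reaching a real system, a team can inspect exactly what an updated agent *would* have done without touching real users.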

Backtesting AI Behavior

Organizations can compare how different versions of AI agents would have behaved in past operational scenarios.

Would the updated agent have made better decisions?

Would it have failed under certain edge cases?

Would customer interactions have improved or degraded?

Chronicle Labs allows companies to answer these questions before deployment.
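One simple way to frame backtesting is to run two agent versions over the same history and compare them against a reference outcome. This Python sketch assumes, hypothetically, that each historical event carries an `expected` label (for example, a resolution a human later confirmed); the article does not say how Chronicle Labs scores agents, so the metric here is only an illustration.

```python
from typing import Any, Callable

# A hypothetical agent: maps an event payload to a decision label.
Agent = Callable[[dict[str, Any]], str]


def backtest(
    history: list[dict[str, Any]], baseline: Agent, candidate: Agent
) -> dict[str, Any]:
    """Score two agent versions on the same historical events."""
    if not history:
        raise ValueError("history must contain at least one event")
    base_hits = cand_hits = 0
    regressions = []  # indices the baseline got right but the candidate got wrong
    for i, event in enumerate(history):
        expected = event["expected"]
        base_ok = baseline(event["payload"]) == expected
        cand_ok = candidate(event["payload"]) == expected
        base_hits += base_ok
        cand_hits += cand_ok
        if base_ok and not cand_ok:
            regressions.append(i)
    n = len(history)
    return {
        "baseline_accuracy": base_hits / n,
        "candidate_accuracy": cand_hits / n,
        "regressions": regressions,
    }
```

Tracking regressions separately from overall accuracy matters: a candidate can score higher on average while still failing specific edge cases the current agent handles correctly.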

Creating Seeded Sandboxes

The platform converts operational history into seeded sandbox environments that mirror real business conditions.

Rather than receiving generic testing environments, companies get simulations tailored to their unique workflows, processes, and operational complexity.

This enables teams to validate AI behavior against the actual reality of their organization.
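A seeded sandbox can be sketched as a snapshot of business state rebuilt from recorded change events, with each test run receiving its own disposable copy. In this minimal Python sketch, the event fields (`timestamp`, `entity_id`, `changes`) and the `SeededSandbox` class are hypothetical; they illustrate the concept rather than describe Chronicle Labs' implementation.

```python
from copy import deepcopy
from typing import Any


def build_snapshot(events: list[dict[str, Any]]) -> dict[str, dict[str, Any]]:
    """Fold recorded change events, in timestamp order, into per-entity state."""
    snapshot: dict[str, dict[str, Any]] = {}
    for event in sorted(events, key=lambda e: e["timestamp"]):
        # Later events overwrite earlier values for the same field.
        snapshot.setdefault(event["entity_id"], {}).update(event["changes"])
    return snapshot


class SeededSandbox:
    """A disposable copy of business state seeded from operational history."""

    def __init__(self, snapshot: dict[str, dict[str, Any]]) -> None:
        # Deep copy so an agent under test can never mutate the shared seed.
        self.state = deepcopy(snapshot)

    def update(self, entity_id: str, changes: dict[str, Any]) -> None:
        self.state.setdefault(entity_id, {}).update(changes)
```

The deep copy is the key design choice: one test run cannot leak state into another, so every experiment starts from the same realistic baseline.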

Why Is Replayability Becoming Essential for AI Development?

One of Chronicle Labs’ central ideas is that replayability will become a foundational requirement for AI systems.

Modern enterprises increasingly depend on AI systems that evolve rapidly. Prompts change, models update, APIs shift, workflows expand, and business processes drift over time.

Without replayable infrastructure, organizations face a dangerous choice:

- Move slowly and limit AI deployment

- Or move quickly while accepting significant operational risk

Chronicle Labs wants to eliminate this tradeoff.

Replayability offers several major advantages:

Understanding Failures

When an AI agent produces a problematic result, replay systems make it possible to reconstruct the exact context that led to the behavior.

This improves debugging and root-cause analysis significantly.
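Reconstructing the context behind a failure is the same "travel back in time" idea described for aerospace replay logs: replay only the events that happened up to the moment of the failure. This minimal Python sketch assumes a hypothetical event format (`timestamp`, `entity_id`, `changes`) and returns the rebuilt state plus the last few inputs; it is an illustration, not a description of Chronicle Labs' tooling.

```python
from typing import Any


def reconstruct_context(
    events: list[dict[str, Any]], failure_time: float, lookback: int = 3
) -> dict[str, Any]:
    """Rebuild the state an agent saw at `failure_time`, plus its recent inputs."""
    ordered = sorted(events, key=lambda e: e["timestamp"])
    # Keep only events that had already happened when the failure occurred.
    prior = [e for e in ordered if e["timestamp"] <= failure_time]
    state: dict[str, dict[str, Any]] = {}
    for event in prior:
        state.setdefault(event["entity_id"], {}).update(event["changes"])
    recent = prior[-lookback:] if lookback > 0 else []
    return {"state": state, "recent_events": recent}
```

Events after `failure_time` are deliberately excluded, so the reconstructed picture matches what the agent could actually have known when it acted.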

Safer Iteration

Teams can safely experiment with new behaviors before exposing real users to changes.

This encourages innovation while reducing operational risk.

Continuous Validation

Because replay environments are based on actual operational history, testing evolves alongside the business.

This prevents evaluation systems from becoming stale.
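One way to keep tests evolving with the business is to rebuild the evaluation set from a rolling window of recent operational history instead of a fixed dataset. This Python sketch is a hypothetical illustration: the `category` field, the time window, and the per-category cap are assumptions, not parameters the article attributes to Chronicle Labs.

```python
from typing import Any


def refresh_eval_set(
    events: list[dict[str, Any]],
    now: float,
    window_seconds: float,
    per_category: int = 2,
) -> list[dict[str, Any]]:
    """Pick the most recent events per category from a rolling time window."""
    recent = [e for e in events if now - window_seconds <= e["timestamp"] <= now]
    recent.sort(key=lambda e: e["timestamp"], reverse=True)  # newest first
    picked: list[dict[str, Any]] = []
    counts: dict[str, int] = {}
    for event in recent:
        category = event["category"]
        # Cap each category so one busy workflow does not crowd out the rest.
        if counts.get(category, 0) < per_category:
            picked.append(event)
            counts[category] = counts.get(category, 0) + 1
    return picked
```

Running this on a schedule means old scenarios age out and new edge cases enter the test set automatically as they appear in production.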

Enterprise Trust

Large organizations are naturally risk-averse. Replayability provides a level of visibility and predictability that enterprises often require before adopting new technologies at scale.

Chronicle Labs believes these capabilities will become increasingly critical as AI agents gain more autonomy.

Why Are Traditional AI Evaluations Failing Enterprises?

One of the strongest criticisms Chronicle Labs makes of current AI development practices is their overreliance on static evaluation systems.

Today, many companies build evaluation datasets manually. Teams design prompts, expected outputs, and test scenarios to measure agent quality.

While this approach works during early experimentation, it often breaks down at scale.

The problem is that businesses are dynamic systems.

Customer behavior changes.

Operational processes evolve.

New edge cases appear constantly.

Internal tools get updated.

Policies shift over time.

Static evaluation datasets struggle to reflect this complexity. As a result, organizations may falsely believe their AI agents are reliable when the systems have only been tested against outdated scenarios.

Chronicle Labs addresses this by grounding evaluations in operational reality rather than hypothetical cases.

The company’s replay-based approach enables businesses to validate AI agents against actual historical workflows, not artificially simplified benchmarks.

Could Chronicle Labs Become Core Infrastructure for Enterprise AI?

The AI industry is rapidly moving toward agentic systems capable of performing increasingly complex tasks autonomously.

As this transition accelerates, infrastructure layers surrounding reliability, monitoring, testing, governance, and observability are becoming critically important.

Chronicle Labs is entering this emerging category early.

Its focus is not on building another AI assistant or model provider. Instead, the company is building infrastructure designed to help enterprises safely operationalize AI agents.

This positioning could become strategically important.

Historically, every major computing wave has produced foundational infrastructure companies:

- Cloud computing created monitoring and DevOps platforms

- Mobile computing created analytics and deployment ecosystems

- Cybersecurity growth created governance and compliance tooling

AI agents may now require an entirely new operational layer.

Chronicle Labs appears to believe that replayability, backtesting, and operational simulation will become as essential to AI systems as staging environments became for traditional software development.

What Makes Chronicle Labs Different From Other AI Startups?

Many AI startups focus on making agents smarter, faster, or more autonomous.

Chronicle Labs focuses on making them safer and more reliable.

This distinction matters.

The company is not primarily competing in the crowded race to build better general-purpose AI models. Instead, it is addressing the infrastructure challenges that emerge once enterprises attempt real-world deployment.

Several factors differentiate the company:

Aerospace-Inspired Thinking

The founders bring experience from environments where autonomous systems require extreme reliability.

This engineering philosophy shapes the product’s emphasis on replayability and operational precision.

Operational Reality Over Synthetic Testing

Chronicle Labs uses actual historical workflows rather than artificial benchmarks.

This produces more realistic testing environments.

Enterprise-Centric Design

The platform specifically targets organizations with high operational risk and complex workflows.

These companies often require extensive validation before deploying autonomous systems.

Long-Term Infrastructure Vision

Rather than building a narrow AI feature, Chronicle Labs is developing foundational infrastructure that could support the broader enterprise AI ecosystem.

What Could the Future Look Like for Chronicle Labs?

The enterprise AI market is still in its early stages, but demand for operational reliability is growing rapidly.

As AI agents become more autonomous, companies will likely require tools that provide:

- Safe deployment environments

- Continuous validation systems

- Behavioral replay infrastructure

- AI observability

- Historical backtesting

- Governance and compliance tooling

Chronicle Labs is positioning itself directly within this emerging infrastructure category.

If the company succeeds, its platform could become part of the standard development lifecycle for enterprise AI systems.

Just as modern software teams rely on staging environments, observability tools, and CI/CD pipelines, future AI teams may depend on replay-based testing systems before deploying autonomous agents into production.

Chronicle Labs represents a broader shift happening across the AI industry: the transition from experimentation toward operational maturity.

The startup’s vision suggests that the next generation of AI infrastructure may not simply focus on making agents more capable, but on making them trustworthy enough for enterprises to rely on every day.